NegotiationAcad: a 3-agent voice-coached training platform, now in pilot with INSEAD's negotiation faculty

Negotiation is a skill that degrades without practice — and business schools have no scalable way to provide it

The INSEAD Negotiation course is one of the most valued in the MBA curriculum. But like every negotiation course at any top school, it has a fundamental constraint: the only way to practice is with another human in the room. Role-plays happen twice a semester. There's no feedback loop between sessions. Frameworks get taught, but the muscle memory doesn't form.

I was sitting in one of those sessions and thought: the limiting resource here isn't the framework — it's the practice partner. An AI system with the right architecture could be an on-demand practice partner that never gets tired, gives structured feedback, and actually adapts to each learner's weak spots.

The hard part isn't the AI part. It's building a voice interface with low enough latency to feel like a real negotiation, and a coaching agent that gives feedback specific enough to be actionable rather than generic praise.

Three agents, one conversation — each with a distinct and non-overlapping job

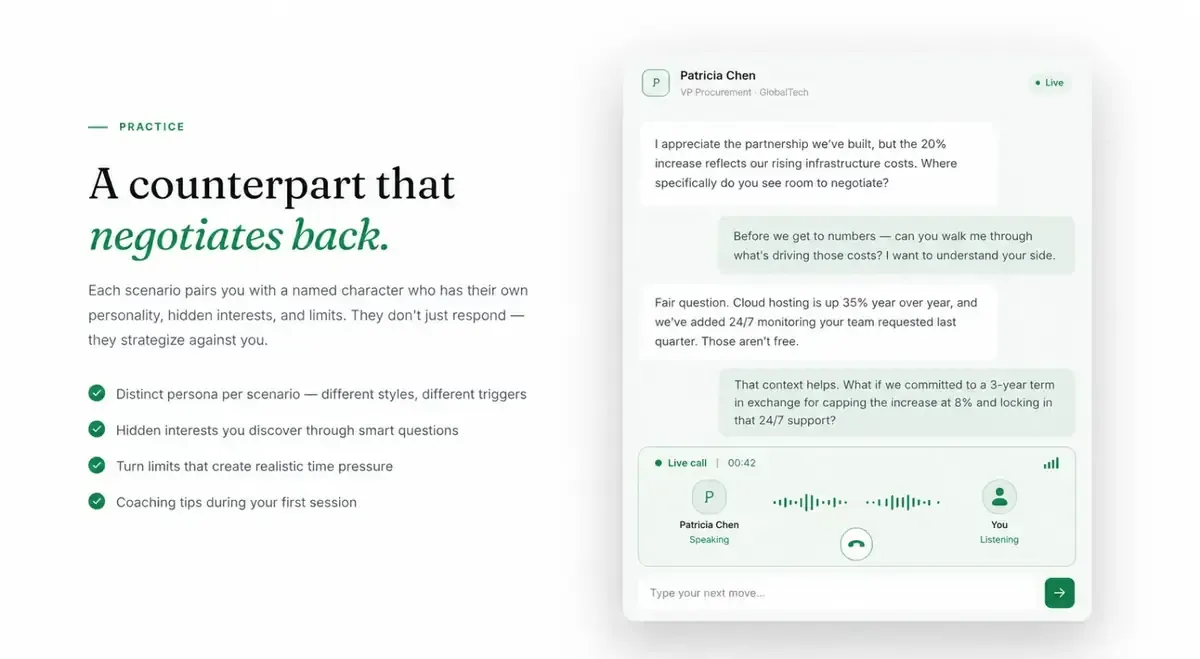

A single agent that's simultaneously playing a hardball negotiator and giving metacognitive coaching feedback produces incoherent behavior. The role-play breaks every time the agent slips into coaching mode mid-conversation. The architecture I settled on separates these concerns completely:

The Scenario Agent pulls from a pgvector knowledge base of INSEAD frameworks (BATNA, ZOPA, principled negotiation, reciprocity tactics). The Counterpart Agent receives only the scenario and the conversation history; it has no access to the coaching layer, which is what keeps it in character. The Coach Agent sees everything and runs after each turn, evaluating it against a structured rubric.
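To make the separation concrete, here is a minimal Python sketch of how each agent's context could be assembled. It is an illustration under assumptions, not the platform's actual code: `Session`, `Turn`, `counterpart_context`, and `coach_context` are hypothetical names, and the pgvector retrieval and model calls are left out entirely.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    speaker: str  # "learner" or "counterpart"
    text: str


@dataclass
class Session:
    # Hypothetical container for everything one practice session knows.
    scenario: str                                      # produced by the Scenario Agent
    history: list[Turn] = field(default_factory=list)  # the spoken conversation
    rubric: list[str] = field(default_factory=list)    # coaching criteria
    feedback: list[str] = field(default_factory=list)  # Coach Agent output so far


def counterpart_context(session: Session) -> dict:
    """Scenario and history only; nothing from the coaching layer."""
    return {"scenario": session.scenario, "history": list(session.history)}


def coach_context(session: Session) -> dict:
    """The full session state, including the rubric and earlier feedback."""
    return {
        "scenario": session.scenario,
        "history": list(session.history),
        "rubric": list(session.rubric),
        "feedback": list(session.feedback),
    }


session = Session(
    scenario="Supplier contract renewal; the learner plays the buyer.",
    rubric=["Did the learner probe the counterpart's BATNA?"],
)
session.history.append(Turn("learner", "We can do 5% above last year's price."))
session.feedback.append("Anchored first without probing alternatives.")

print(sorted(counterpart_context(session)))
print(sorted(coach_context(session)))
```

The point of the sketch is that the Counterpart Agent's isolation is enforced by what gets passed in, not by instructions in a prompt: coaching notes never appear in its context, so there is nothing for it to slip into.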

Sub-400ms felt like a human pause. 600ms felt like lag. That 200ms window was the product.

The Counterpart Agent needed to be voice-first to feel like a real negotiation. Real negotiations have pregnant pauses, interruptions, and emotional tempo changes — none of which work with a half-second processing lag.
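One way to keep that latency target visible during development is to time each response and bucket it against the two thresholds mentioned above. The sketch below is illustrative only: `classify_latency` and `timed` are hypothetical helpers, and the "borderline" label for the 400 to 600 ms band is my assumption, since only the two endpoints are described.

```python
import time

PAUSE_MS = 400.0  # below this, a response felt like a human pause
LAG_MS = 600.0    # at this point, a response felt like lag


def classify_latency(ms: float) -> str:
    """Bucket a response latency in milliseconds against the two thresholds."""
    if ms < PAUSE_MS:
        return "pause"
    if ms >= LAG_MS:
        return "lag"
    return "borderline"  # assumption: the band in between is not labelled in the text


def timed(fn, *args):
    """Call fn(*args) and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = fn(*args)
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms


# Stand-in for a real voice round trip; any callable works here.
reply, ms = timed(str.upper, "that price is too high")
print(reply, classify_latency(ms))
```

In a real pipeline the timed call would wrap the full round trip from the end of the learner's speech to the first audio out, since that is the gap the learner actually hears.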